The phase difference between the coupled and mainline signals is critical, typically targeting 90° for ideal quadrature operation. This shift is frequency-dependent and is measured using a vector network analyzer, which precisely quantifies the phase deviation (e.g., ±5°) from the theoretical value across the specified bandwidth, such as 1-2 GHz.

What is Phase Difference?

In the world of RF and microwave engineering, few parameters are as fundamental—and as frequently misunderstood—as phase difference. Simply put, it measures the offset in timing between two sinusoidal waves, expressed in degrees (°) or radians. For example, if two signals at 2.4 GHz are out of phase by 90°, one wave reaches its peak voltage exactly 104 picoseconds before the other. This tiny timing difference might seem insignificant, but it has major implications. In a typical 4-port directional coupler operating at 3 GHz, a phase error of just 10° between the coupled and output ports can introduce an amplitude imbalance of up to 1 dB, reducing power measurement accuracy by nearly 15%. Modern vector network analyzers (VNAs) can detect phase shifts as small as 0.1°, highlighting the critical need for precision. Understanding phase difference isn’t just academic—it’s essential for optimizing performance in systems like 5G base stations, where phase coherence across multiple antenna elements directly impacts beamforming efficiency and data throughput.

Phase difference quantifies the time shift between two periodic signals and is a core concept in analyzing how directional couplers behave. Unlike amplitude, which measures signal strength, phase describes the wave’s position in its cycle.
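
As a rough illustration of the timing relationship described above, the short Python sketch below converts a phase offset into a time offset at a single frequency. The function name `phase_to_time_offset` is a hypothetical helper introduced for this example, not part of any instrument software.

```python
def phase_to_time_offset(phase_deg: float, freq_hz: float) -> float:
    """Return the time offset in seconds that corresponds to a phase offset.

    Hypothetical helper: one full cycle (360 degrees) equals one period.
    """
    period_s = 1.0 / freq_hz
    return (phase_deg / 360.0) * period_s


# 90 degrees at 2.4 GHz, the example used in the text
offset_s = phase_to_time_offset(90.0, 2.4e9)
print(f"{offset_s * 1e12:.1f} ps")  # about 104.2 ps
```

The same phase offset corresponds to a shorter time offset as frequency rises, which is one reason phase tolerances become harder to hold at higher bands.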

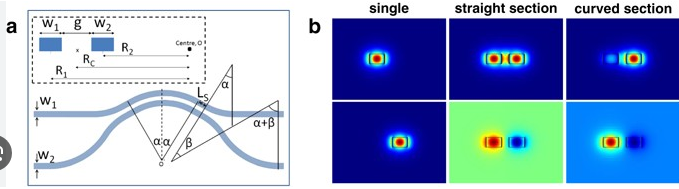

When an input signal enters a directional coupler, it splits into two paths: one going straight to the output port and another to the coupled port. Due to the physical layout and electrical properties of the coupler, the signal arriving at the coupled port is delayed relative to the output. This delay is what we refer to as the phase difference.

In a well-designed 20 dB coupler operating at 6 GHz, the phase difference between the output and coupled ports should ideally be 90° ± 3°. This quadrature relationship is intentional in many designs.

The phase difference isn’t constant; it varies with frequency. For instance, a coupler might have a phase difference of 85° at 1 GHz, but 92° at 2 GHz. This frequency-dependent change is called phase dispersion. If not accounted for, it can lead to measurement errors, especially in broadband applications spanning more than 500 MHz.
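
One way to keep track of this dispersion is to tabulate the deviation from the nominal 90° at each frequency. The sketch below does this for the two example points above. The helper names are hypothetical, and the ±5° tolerance passed in is only an illustrative phase-balance spec.

```python
def quadrature_deviation(phase_by_freq_ghz: dict, nominal_deg: float = 90.0) -> dict:
    """Map each frequency (GHz) to its phase deviation from the nominal value."""
    return {freq: phase - nominal_deg for freq, phase in phase_by_freq_ghz.items()}


def within_balance(deviations: dict, tolerance_deg: float) -> bool:
    """True if every deviation is inside +/- tolerance_deg."""
    return all(abs(dev) <= tolerance_deg for dev in deviations.values())


# Example points from the text: 85 degrees at 1 GHz, 92 degrees at 2 GHz
measured = {1.0: 85.0, 2.0: 92.0}
deviations = quadrature_deviation(measured)
print(deviations)                       # {1.0: -5.0, 2.0: 2.0}
print(within_balance(deviations, 5.0))  # True
```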

Engineers measure this parameter using a VNA, which compares the phase of the signals at two ports. The accuracy of this measurement depends heavily on calibration; even a slight miscalibration can add a systematic error of 2–5°. For a coupler with a specified phase balance of ±5°, ensuring measurement precision is non-negotiable.

How Directional Couplers Work

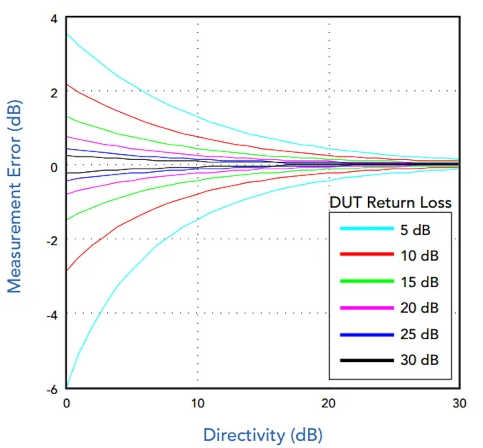

Directional couplers are fundamental components in RF systems, designed to sample a small portion of the signal traveling in one direction while ignoring the reverse. For instance, a common 20 dB coupler might divert only 1% of the forward power (e.g., 10 mW from a 1 W input) to the coupled port, with the remaining 99% passing to the output. This precise power splitting is frequency-dependent; a coupler rated for 2–4 GHz typically maintains its directivity—the ability to distinguish forward and reflected waves—above 25 dB across 90% of that band. Modern couplers can handle power levels from a few milliwatts up to several hundred watts, with insertion loss often below 0.3 dB. The physical length between ports in a microstrip coupler operating at 2.5 GHz is roughly 15 mm, a dimension directly tied to the wavelength. Understanding these mechanics is key to deploying couplers effectively in applications like antenna VSWR monitoring or transmitter output sampling, where accuracy directly impacts system performance and cost.

A directional coupler is a passive device that routes power based on the direction of signal flow. It typically has four ports: Input, Output, Coupled, and Isolated. When you send a signal into the Input port, most of it travels to the Output port, but a small, fixed percentage is “coupled” out to the Coupled port. The Isolated port, where reverse power should ideally be terminated, often has a built-in 50-ohm load.

The key to its operation lies in careful geometric design and electromagnetic coupling between transmission lines. In a microstrip coupler, two parallel traces are separated by a specific gap—often between 0.2 mm and 0.5 mm for a 50-ohm system at 3 GHz—to achieve the desired coupling factor. The coupled signal’s power level is determined by this physical gap and the length of the coupled region, which is usually designed to be one-quarter wavelength at the center frequency.
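
For a sense of scale, the quarter-wavelength length can be estimated from the center frequency and the effective permittivity of the line. The sketch below is a first-order estimate only: the function name is hypothetical, and the effective permittivity of 4.0 is an assumed illustrative value, not a figure for any particular substrate. Real coupled lines have slightly different even-mode and odd-mode velocities, which this estimate ignores.

```python
import math

C_M_PER_S = 299_792_458.0  # speed of light in vacuum


def quarter_wave_length_mm(freq_hz: float, eps_eff: float) -> float:
    """First-order quarter-wavelength estimate for a line with effective permittivity eps_eff."""
    guided_wavelength_m = C_M_PER_S / (freq_hz * math.sqrt(eps_eff))
    return guided_wavelength_m / 4.0 * 1000.0


# eps_eff = 4.0 is an assumed value chosen for illustration
print(f"{quarter_wave_length_mm(2.5e9, 4.0):.1f} mm")  # about 15.0 mm
```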

For example, a 30 dB coupler samples only 0.1% of the input power. If you input a 40 W signal, the coupled port provides just 0.04 W, while the output delivers approximately 39.96 W (assuming negligible loss).
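
The arithmetic behind these figures is a plain decibel-to-ratio conversion. The sketch below reproduces it under the same simplifying assumption as the text: an ideal coupler with no dissipative loss. Function names are hypothetical.

```python
def coupled_fraction(coupling_db: float) -> float:
    """Fraction of input power that appears at the coupled port."""
    return 10.0 ** (-coupling_db / 10.0)


def split_power(input_w: float, coupling_db: float) -> tuple:
    """Return (coupled_w, through_w) for an ideal, lossless coupler."""
    coupled_w = input_w * coupled_fraction(coupling_db)
    return coupled_w, input_w - coupled_w


coupled_w, through_w = split_power(40.0, 30.0)
print(f"{coupled_w:.2f} W coupled, {through_w:.2f} W through")  # 0.04 W coupled, 39.96 W through
```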

Measuring Phase Accurately

Accurately measuring phase difference in directional couplers is a critical task that directly impacts system performance. For instance, in a 5G massive MIMO array operating at 3.5 GHz, a phase measurement error of just 5° between antenna elements can reduce beamforming gain by up to 15% and decrease cell edge throughput by approximately 20%. Modern vector network analyzers (VNAs) offer high-resolution phase measurement capabilities, typically with a precision of ±0.5° or better under calibrated conditions. However, achieving this level of accuracy requires careful attention to detail. Factors such as cable stability (phase drift < 0.05°/°C), connector repeatability (contributing up to 2° of error per reconnect), and calibration kit accuracy dominate the uncertainty budget. In production testing, a phase measurement tolerance of ±3° is common for components like couplers and phase shifters, but design validation often demands uncertainties below ±1°. Understanding and controlling these error sources is not optional—it’s essential for ensuring that systems perform as designed, especially in high-frequency applications where wavelength is short and margins are tight.

Achieving accurate phase measurements requires a systematic approach to minimize errors. The primary tool for this is a calibrated Vector Network Analyzer (VNA), which compares the phase of two signals. The most critical step is performing a full 2-port calibration at the plane of measurement, typically using a Short-Open-Load-Thru (SOLT) kit. A high-quality calibration can reduce systematic phase errors from over 10° to less than ±0.5°.

Even after calibration, several factors can degrade accuracy:

- Cable Flexibility: Phase stability is paramount. Semi-rigid cables exhibit minimal phase drift (< 0.1° over 1 hour), but flexible test cables can drift by more than 2° with a 5°C temperature change or movement. For best results, use phase-stable cables and minimize movement during testing.

- Connector Torque: The repeatability of coaxial connections is a major error source. A Type-N connector torqued to 8 in-lbs might show a phase variation of ±0.7° between connections, while an SMA connector torqued to 5 in-lbs can vary by up to ±1.5°. Always use a torque wrench for consistent connections.

- Signal-to-Noise Ratio (SNR): Low power levels increase phase uncertainty. For a measurement at 10 GHz, an SNR of 60 dB yields a phase noise floor of about ±0.1°, but an SNR of 40 dB can increase the uncertainty to ±1.5°. Ensure your signal power is sufficiently high, often between +5 and +10 dBm, without overdriving the receiver.

The measurement setup itself introduces electrical delay. For example, a 1-meter cable with a velocity factor of 0.66 adds approximately 5.05 nanoseconds of delay, equating to roughly 5,460° of phase shift at 3 GHz (more than 15 full cycles). This must be electrically nulled out using the VNA’s delay offset function to read the true phase difference of the device under test (DUT).
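
The delay-to-phase conversion can be sketched in a few lines. The function names below are hypothetical, and the model assumes a uniform cable described by a single velocity factor. The wrapped value shows why a display limited to ±180° hides most of the accumulated phase.

```python
C_M_PER_S = 299_792_458.0  # speed of light in vacuum


def cable_delay_s(length_m: float, velocity_factor: float) -> float:
    """Propagation delay of a uniform cable."""
    return length_m / (velocity_factor * C_M_PER_S)


def delay_to_phase_deg(delay_s: float, freq_hz: float) -> float:
    """Total (unwrapped) phase shift produced by a delay at one frequency."""
    return 360.0 * freq_hz * delay_s


def wrap_phase_deg(phase_deg: float) -> float:
    """Wrap a phase to the range [-180, 180)."""
    return (phase_deg + 180.0) % 360.0 - 180.0


delay = cable_delay_s(1.0, 0.66)        # 1 m cable, velocity factor 0.66
phase = delay_to_phase_deg(delay, 3e9)  # evaluated at 3 GHz
print(f"{delay * 1e9:.2f} ns, {phase:.0f} deg total, {wrap_phase_deg(phase):.1f} deg wrapped")
```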

The following table compares typical phase measurement uncertainty contributors for a mid-range and a high-performance VNA setup:

| Uncertainty Contributor | Mid-Range VNA (e.g., 4 GHz) | High-Performance VNA (e.g., 26 GHz) |
|---|---|---|
| VNA System Accuracy (post-cal) | ±1.2° | ±0.3° |
| Calibration Kit Specified Uncertainty | ±1.5° | ±0.5° |
| Connector Repeatability (per mate) | ±1.8° | ±0.8° |
| Cable Stability (per 1°C change) | ±0.3° | ±0.1° |
| Total Estimated Uncertainty (RSS) | ±2.6° | ±1.0° |
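
The RSS (root-sum-square) total in the last row can be reproduced from the four contributors above it. This is a minimal sketch that assumes the contributors are independent and uncorrelated, which is the usual condition for an RSS combination. The function name is hypothetical.

```python
import math


def rss(contributors_deg: list) -> float:
    """Root-sum-square combination of independent uncertainty contributors."""
    return math.sqrt(sum(value * value for value in contributors_deg))


# Contributors from the table: system accuracy, cal kit, connector, cable (per 1 degC)
mid_range = [1.2, 1.5, 1.8, 0.3]
high_performance = [0.3, 0.5, 0.8, 0.1]

print(round(rss(mid_range), 2))         # 2.65
print(round(rss(high_performance), 2))  # 0.99
```

Because the terms are squared before summing, the largest contributor dominates. In both columns that is connector repeatability, so improving it gives the biggest reduction in the total.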

Temperature control is often overlooked. A directional coupler’s phase response can drift by 0.02° to 0.1° per °C. At the upper figure, a 5°C change alone produces 0.5° of drift, so measurements requiring ±0.5° accuracy need the lab temperature held well within ±5°C of the calibration temperature. Always allow the DUT and test cables to acclimate for at least 30 minutes in a controlled environment.

For the highest accuracy, use the phase difference measurement function directly rather than calculating it from separate phase recordings. This method often uses a math trace that references one channel to another, reducing internal processing errors. Averaging 64 to 128 sweeps can further reduce random noise by a factor of 8 to 11, smoothing the reading to within ±0.1°.
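
The factor of 8 to 11 follows from the square-root law for averaging. The sketch below assumes the noise is random and uncorrelated from sweep to sweep; drift and other systematic errors do not average out this way. The function name is hypothetical.

```python
import math


def averaging_noise_factor(num_sweeps: int) -> float:
    """Factor by which uncorrelated random noise is reduced by averaging."""
    if num_sweeps < 1:
        raise ValueError("num_sweeps must be at least 1")
    return math.sqrt(num_sweeps)


for sweeps in (16, 64, 128):
    print(sweeps, round(averaging_noise_factor(sweeps), 1))
# 16 4.0
# 64 8.0
# 128 11.3
```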

Phase and Signal Strength

The relationship between phase and signal strength in directional couplers is not always direct, but it is critically important for system performance. A common misconception is that phase only affects timing, but it directly influences amplitude when signals combine. For instance, in a power combiner fed by two signals through separate couplers, a phase misalignment of just 10° between the two paths can cause a peak-to-null power variation of up to ±0.8 dB in the combined output. In a 4×4 MIMO system operating at 3.6 GHz, this translates to an effective 12% reduction in antenna array gain if left uncorrected. Modern couplers specify amplitude imbalance relative to phase; a typical 20 dB coupler might have a ±0.4 dB amplitude variation over a ±5° phase shift across its frequency band. This interaction is frequency-dependent: at 6 GHz, a 1° phase error might introduce only 0.05 dB of amplitude error, but at 28 GHz, the same 1° error can cause over 0.2 dB of amplitude uncertainty due to the shorter wavelength. Understanding this coupling is essential for accurate power management, efficient spectrum use, and minimizing distortion in high-frequency systems.

The phase relationship between the output and coupled ports of a directional coupler directly influences the amplitude of the resulting signal when these paths are used in systems that recombine power. This is because the total signal amplitude is the vector sum of the individual waves.
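
The vector-sum idea can be illustrated with complex phasors. The sketch below models only the simplest case: two signals added in an ideal, lossless combiner, with the result compared against perfect in-phase addition. It ignores port mismatch, finite isolation, and frequency-dependent effects, so variations seen in practical hardware can be larger than this idealized figure. The function name and the `amplitude_ratio` parameter are hypothetical.

```python
import cmath
import math


def combining_loss_db(phase_error_deg: float, amplitude_ratio: float = 1.0) -> float:
    """Power of the vector sum of two phasors, in dB relative to in-phase addition."""
    first = 1.0 + 0.0j
    second = amplitude_ratio * cmath.exp(1j * math.radians(phase_error_deg))
    actual_power = abs(first + second) ** 2
    ideal_power = (1.0 + amplitude_ratio) ** 2
    if actual_power == 0.0:
        return float("-inf")  # complete cancellation
    return 10.0 * math.log10(actual_power / ideal_power)


for error_deg in (0.0, 10.0, 90.0):
    print(f"{error_deg:5.1f} deg -> {combining_loss_db(error_deg):.3f} dB")
```

For equal amplitudes the result reduces to 20·log10(cos(Δφ/2)), so the loss grows slowly for small phase errors and rapidly as the error approaches 180°.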

The key metric here is amplitude imbalance, which specifies how much the signal strength varies for a given phase difference. For a standard quadrature (90°) hybrid coupler, an ideal phase difference yields a perfect 3 dB power split between the two output ports. However, a phase error of ±8° can shift this split to 2.7 dB and 3.3 dB, an imbalance of ±0.3 dB.

This effect is magnified at higher frequencies. The following table illustrates how phase error translates to amplitude imbalance at different frequency bands for a coupler with a nominal 90° phase difference:

| Frequency Band | Phase Error | Resulting Amplitude Imbalance (approx.) | Impact on 64-QAM EVM |
|---|---|---|---|
| 2.4 GHz (Wi-Fi/Bluetooth) | ±5° | ±0.25 dB | Increase by ~0.8% |
| 3.5 GHz (5G n78) | ±5° | ±0.3 dB | Increase by ~1.2% |
| 28 GHz (5G mmWave) | ±5° | ±0.9 dB | Increase by ~3.5% |

The most significant impact is seen in beamforming arrays and balanced amplifiers. In an array with 32 antenna elements, a systematic phase error of 7° across all elements can reduce the effective isotropic radiated power (EIRP) by 15% and widen the main beam by 5%, reducing spatial selectivity.

Furthermore, phase-induced amplitude errors compound measurement uncertainty. When using the coupled port to monitor transmit power, a 2° phase shift between the main and coupled paths (perhaps due to temperature drift) can introduce a 0.1 dB error in the power measurement. For a base station transmitting 40 W, a 0.1 dB error is about 2.3% of the reading, or roughly ±0.9 W of measurement uncertainty.
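
Converting a decibel error into watts is a one-line calculation, sketched below with a hypothetical helper. It assumes the error applies directly to the power reading.

```python
def power_error_w(nominal_w: float, error_db: float) -> float:
    """Absolute power error in watts for a reading that is off by error_db."""
    return nominal_w * (10.0 ** (error_db / 10.0) - 1.0)


print(f"{power_error_w(40.0, 0.1):+.2f} W")   # +0.93 W
print(f"{power_error_w(40.0, -0.1):+.2f} W")  # -0.91 W
```

The positive and negative errors are slightly asymmetric in watts because the decibel scale is logarithmic.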

The material properties of the coupler’s substrate also play a role. A substrate with a high thermal coefficient of dielectric constant, say 150 ppm/°C, can cause the electrical length to change with temperature. A 20°C temperature swing may induce a 3° phase shift, which subsequently manifests as a 0.15 dB change in coupled power amplitude, creating an inaccurate and drifting reference signal.

Common Mistakes to Avoid

A simple mistake, like using a calibration kit from a different connector series, can add a systematic phase error of 3° to 8° and degrade directivity by 10 dB. In a production test environment, failing to re-torque SMA connectors to the specified 5 in-lbs can cause phase measurements to vary by ±2° between consecutive tests, leading to a 15% yield loss on tight-tolerance components. Another common oversight is ignoring temperature effects; a coupler’s phase response can drift 0.1° per °C, meaning a 10°C shift in lab temperature between morning and afternoon can invalidate all measurements requiring ±1° accuracy. These are not minor issues—they directly impact product performance, project timelines, and cost. A single mischaracterized coupler in a satellite payload can result in months of diagnostic rework and potential revenue loss exceeding $50,000. Recognizing and avoiding these common pitfalls is essential for achieving reliable and repeatable results.

One of the most frequent errors is ignoring the impact of cable phase stability. Using standard flexible RF cables for phase measurements is a recipe for inconsistency. These cables can exhibit phase drift of over 5° with just a 30-degree bend or a 5°C temperature change. For any measurement requiring better than ±2° accuracy, invest in phase-stable or semi-rigid cables and minimize movement once the setup is configured.

Improper connector care is another major source of error. A dirty or damaged connector interface can easily introduce 1-2 dB of insertion loss and 4-6° of unpredictable phase shift. Each mating cycle on a worn connector increases measurement variance. Inspect connectors meticulously before use; a single particle of dust can be enough to skew results. Establish a strict maintenance schedule and clean connectors every 50-100 mating cycles.

Many engineers use an incorrect calibration method or kit. Using a 3.5 mm calibration kit to calibrate for an N-type connector interface will introduce a ±4° residual phase error. Always use a calibration kit that exactly matches the connector type and gender of your device under test. Furthermore, perform the calibration at the exact same reference plane where the DUT will be connected. Adding even 5 cm of extra cable after calibration shifts the reference plane by roughly 270° of phase at 3 GHz (assuming a velocity factor of 0.66).

Neglecting to allow for thermal equilibrium is a critical mistake. Components and test equipment require time to stabilize. Powering on a VNA and immediately calibrating and measuring can lead to a drift of 0.5° to 1.5° over the first 30 minutes. The best practice is to power on all equipment—including the DUT if possible—and allow 45 minutes for the entire system to stabilize at a constant lab temperature (23°C ±2°C is ideal) before beginning calibration.

A subtle but costly error is operating at incorrect power levels. Measuring a coupler’s phase response at -30 dBm will result in a poor signal-to-noise ratio, increasing phase measurement jitter to ±1.5°. Conversely, measuring a 5 W coupler at its full 37 dBm power rating without allowing for thermal expansion can cause its phase response to shift by 3° after 10 minutes of operation. Always check the recommended operating power and ensure your test signal is within the linear region of all components, typically between -5 dBm and +10 dBm for characterization.
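
Since power ratings are often quoted in watts while VNA source levels are set in dBm, a quick conversion helps avoid mix-ups. The helper names below are hypothetical.

```python
import math


def watts_to_dbm(power_w: float) -> float:
    """Convert watts to dBm (decibels relative to 1 mW)."""
    return 10.0 * math.log10(power_w * 1000.0)


def dbm_to_watts(power_dbm: float) -> float:
    """Convert dBm to watts."""
    return 10.0 ** (power_dbm / 10.0) / 1000.0


print(f"{watts_to_dbm(5.0):.1f} dBm")          # 37.0 dBm
print(f"{dbm_to_watts(-30.0) * 1e6:.1f} uW")   # 1.0 uW
```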

Practical Measurement Tips

Simply using a torque wrench to tighten SMA connectors to their specified torque (typically 5 to 8 in-lbs, depending on the connector) instead of hand-tightening can improve phase measurement repeatability from more than ±2° to ±0.8° at 6 GHz. Allowing your VNA and DUT to thermally stabilize for 45 minutes in a 23°C ±2°C environment can reduce thermal drift errors from ±1.2° to under ±0.3°. These small, practical steps have a greater impact on data integrity than the raw accuracy of your instrument. By focusing on methodical techniques, you can consistently achieve phase accuracy better than ±1°, even with mid-range equipment.

Start with a meticulous calibration. Use a calibration kit with connectors that exactly match your device under test (DUT). A mismatch (e.g., using a 3.5 mm kit for an N-type DUT) can leave a residual phase error of roughly ±4° to ±5°. Calibrate at the exact end of your test cables. After calibration, avoid moving the cables; a bend radius smaller than 5 cm can change the phase response by over 2°.

Cable management is critical. Label your test ports and cables to ensure you use the same port for the same measurement every time. This minimizes variability caused by slight differences in port match, which can account for ±0.5° of error. Use phase-stable cables for any measurement requiring better than ±2° accuracy. Keep cable lengths as short as possible; each additional 10 cm of cable adds roughly 0.5 ns of delay (at a velocity factor of 0.66), which corresponds to about 1,090° of phase at 6 GHz, or roughly three full cycles.

Control your environment. Perform measurements in a temperature-stable lab. The phase response of a typical coupler drifts about 0.1° per °C. A 5°C shift during a long test sequence can introduce a 0.5° error. Record the ambient temperature and humidity for each measurement session. For the highest precision, consider testing inside a temperature-controlled chamber set to 25°C.
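
The drift arithmetic can be turned around to find how much temperature change a given phase budget allows. The sketch below assumes a linear drift coefficient, which is a simplification. Both helper names are hypothetical.

```python
def thermal_phase_drift_deg(coeff_deg_per_c: float, delta_t_c: float) -> float:
    """Phase drift for a temperature change, assuming a linear coefficient."""
    return coeff_deg_per_c * abs(delta_t_c)


def max_temperature_swing_c(phase_budget_deg: float, coeff_deg_per_c: float) -> float:
    """Largest temperature change that stays inside the phase budget."""
    return phase_budget_deg / coeff_deg_per_c


print(thermal_phase_drift_deg(0.1, 5.0))  # 0.5
print(max_temperature_swing_c(1.0, 0.1))  # 10.0
```

In practice only part of the total error budget can be given to temperature, so the allowable swing is usually smaller than this upper bound.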

| Parameter | Typical Mistake | Recommended Practice | Expected Improvement |
|---|---|---|---|
| Connector Torque | Hand-tightened (~3 in-lbs) | Torqued to spec (e.g., 5 to 8 in-lbs for SMA, depending on the connector) | Repeatability improves from ±2.0° to ±0.8° |
| Sweep Time | Fast sweep (10 ms), no averaging | Medium sweep (100 ms), 16x averaging | Reduces phase noise from ±0.5° to ±0.1° |
| Signal Power | Too low (-30 dBm) or too high (+20 dBm) | Optimized for SNR (e.g., 0 to +10 dBm) | Minimizes jitter and DUT heating effects |
| Thermal Soak | Measure immediately after power-on | Wait 45 mins for system stabilization | Reduces drift from ±1.5° to ±0.3° |
| Test Frequency | Wide, sparse sweep (201 points) | Dense sweep over narrow band (1001 points) | Better reveals fine phase response details |

Optimize your VNA settings. Use a slow sweep speed and enable averaging (16 to 64 sweeps) to reduce random noise. This can lower the phase noise floor from ±0.4° to under ±0.1°. Set your IF bandwidth to 100 Hz for a good balance between speed and noise. Use a sufficient number of data points—at least 1001 points for a wideband sweep—to ensure you don’t miss narrow features in the phase response.

Verify your setup with a known standard. After calibration, measure a high-quality through line or phase reference. The phase measurement should be 0° ±0.5° for a through connection across your frequency band. Any significant deviation (e.g., > ±1°) indicates a problem with your calibration, cables, or connectors that must be investigated before measuring your DUT.
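
This check is easy to automate once the through-line phase trace has been exported. The sketch below uses the two thresholds from the paragraph above. The function name, the plain list input, and the "marginal" label for readings between the two thresholds are assumptions made for illustration.

```python
def check_thru_phase(phases_deg: list,
                     pass_limit_deg: float = 0.5,
                     investigate_limit_deg: float = 1.0) -> str:
    """Classify a through-line phase trace by its worst-case deviation from 0 degrees."""
    worst = max(abs(phase) for phase in phases_deg)
    if worst <= pass_limit_deg:
        return "pass"
    if worst > investigate_limit_deg:
        return "investigate"
    return "marginal"  # assumed label for the range between the two limits


print(check_thru_phase([0.1, -0.3, 0.2]))  # pass
print(check_thru_phase([0.2, 1.4, -0.1]))  # investigate
```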